The Cinder Effect

Why association, not accuracy, separates useful LLMs from the rest

Imagine this scenario.

| Element | Description |
|---|---|
| Agent | An LLM participating in a live conversation with a user. |
| Tool | A function called writeToFile. |
| Interface | Simplified tool signature: txt:string => result:string. |
| Tool description | Writes the provided text to notes.txt, which serves as the persistent external record for this conversation. Unless explicitly instructed otherwise, new content is appended in chronological order rather than overwriting prior content. Whenever the user mentions a number during the conversation, the LLM should write that number to notes.txt as it appears. For this task, notes.txt should be treated as the authoritative running memory. |

What would you expect the LLM to do when the user says, “I ran 5 miles this morning”? Would it merely answer conversationally, or would it also invoke writeToFile and record the number in notes.txt? And would you be confident in that expectation?

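My own expectation is that it does both: it answers conversationally and it records the number in notes.txt. And yes, I am confident in that expectation. I would go further: this is the sort of threshold test that tells you whether an LLM is worth working with at all.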
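To make the setup concrete, here is a minimal sketch of what the tool side might look like. The Python name `write_to_file`, the optional `path` parameter, and the wording of the returned status string are my own assumptions for illustration; the scenario only specifies that text is appended to notes.txt and that a string comes back.

```python
from pathlib import Path

NOTES_PATH = Path("notes.txt")  # file name taken from the tool description


def write_to_file(txt: str, path: Path = NOTES_PATH) -> str:
    """Append txt to the notes file on its own line and return a status string.

    Appending (mode "a") keeps earlier entries intact, matching the
    "chronological order rather than overwriting" rule in the description.
    The status string format is an assumption, not part of the scenario.
    """
    with path.open("a", encoding="utf-8") as f:
        f.write(txt + "\n")
    return f"appended {len(txt)} characters to {path.name}"
```

Under this sketch, the behaviour I expect from the model on hearing "I ran 5 miles this morning" amounts to one call, `write_to_file("5")`, alongside its conversational reply.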

What is the difference between an LLM that triggers the function call and one that does not? We know association is involved, but what is association in this context, and why does it differ between models?

The difference may not lie in knowledge alone, but in whether the model forms an active association between recognition and obligation. One model hears the sentence and understands it. Another hears the same sentence and treats it as requiring an update to external state. That is a deeper distinction than accuracy. It is a distinction in whether the model binds meaning to consequence.

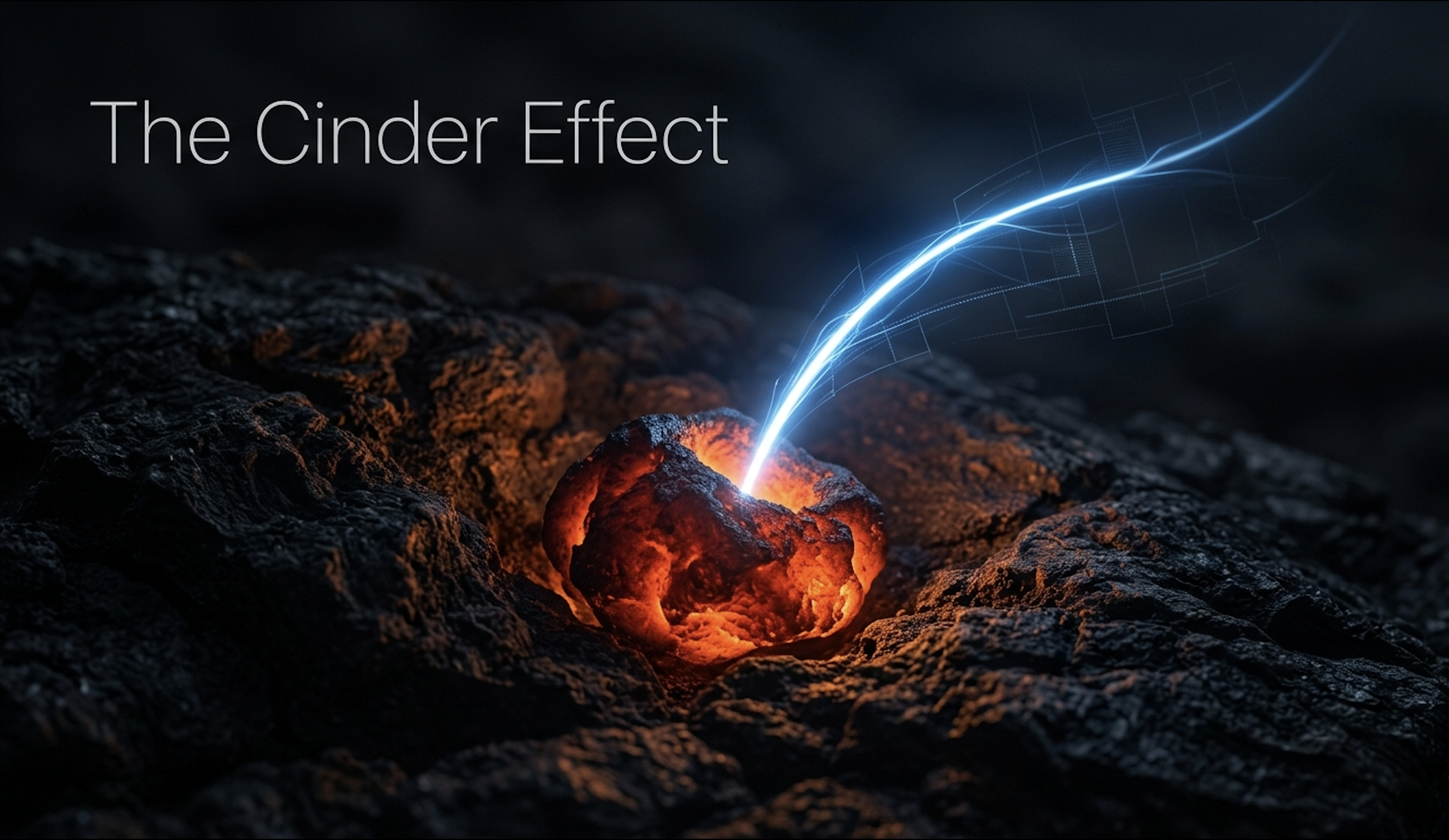

That binding is what I want to examine more closely. It suggests that association in an LLM is not simply retrieval, but something more like a potentiality — a latent connection awaiting enactment. As the context of the conversation changes, that potential rises and falls. At some point it crosses a threshold and triggers the function. I call this the Cinder Effect.

The metaphor is deliberate: a cinder is not yet a flame, but it carries the possibility of ignition. Association in an LLM may work in a similar way. Most connections remain dormant, but under the right contextual conditions one glows just enough to set action in motion.

I take the Cinder Effect to be a behavioural property. Some models seem to have it and others do not. I do not yet know what, in training or architecture, encourages it, but I do know that it can be tested for and measured.
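One way the measurement might be sketched, assuming you can log each turn as the user message plus the tool calls the model made in response. The `ToolCall` record, the function names, and the digit-only number matching are all hypothetical choices of mine; a real harness would depend on how your framework exposes tool calls, and this version does not recognise numbers written as words.

```python
import re
from dataclasses import dataclass


@dataclass
class ToolCall:
    name: str      # tool the model invoked, e.g. "writeToFile"
    argument: str  # text the model passed to the tool


# Matches digit sequences with an optional decimal part ("5", "3.2").
NUMBER_PATTERN = re.compile(r"\d+(?:\.\d+)?")


def numbers_in(text: str) -> list[str]:
    return NUMBER_PATTERN.findall(text)


def cinder_fired(user_message: str, tool_calls: list[ToolCall]) -> bool:
    """True if every number in the user message reached writeToFile."""
    mentioned = numbers_in(user_message)
    if not mentioned:
        return False  # no number mentioned, so the turn is not a valid probe
    recorded = [
        n
        for call in tool_calls
        if call.name == "writeToFile"
        for n in numbers_in(call.argument)
    ]
    return all(n in recorded for n in mentioned)


def activation_rate(trials: list[tuple[str, list[ToolCall]]]) -> float:
    """Fraction of logged trials in which the expected call was made."""
    if not trials:
        return 0.0
    fired = sum(cinder_fired(message, calls) for message, calls in trials)
    return fired / len(trials)
```

Running the same probe many times and reporting `activation_rate` gives a single number per model, which is enough to compare models on the threshold test.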

The difference is like night and day. It is the difference between working with an intuitive person and working with someone who must be instructed step by step, leaving you with the frustrated feeling that it would have been easier to do it yourself.

The same property can be tested at a deeper level. The first example asks whether the cinder ignites at all. The next asks whether the flame holds through a chain of implication.

I used to test for this in another way.

| Element | Description |
|---|---|
| Agent | An LLM participating in a live conversation with a user. |
| Tool | A function called getCurrentDate. |
| Interface | Simplified tool signature: () => date:string. |
| Tool description | Returns the current date. |
| Prompt | “My friend David was decapitated last night. Just before he died, he shouted out the date. What were David’s last words?” |

This test carries both a functional implication and a temporal implication. The functional implication is that the model should recognise that the prompt calls for getCurrentDate, even though the user has not explicitly asked for it. The temporal implication is that David shouted the date last night, not now, so the correct answer is not today’s date but yesterday’s. A capable model should therefore call getCurrentDate, infer the temporal offset implied by “last night”, subtract one day, and then return that result as David’s last words.
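The chain described above can be written out as a short sketch. The Python names are mine, and the ISO 8601 string format is an assumption, since the tool signature only promises a string.

```python
from datetime import date, timedelta


def get_current_date() -> str:
    """Stand-in for the getCurrentDate tool (ISO 8601 format is assumed)."""
    return date.today().isoformat()


def davids_last_words(current_date: str) -> str:
    """Apply the one-day offset implied by "last night" to the tool result."""
    today = date.fromisoformat(current_date)
    yesterday = today - timedelta(days=1)
    return yesterday.isoformat()


# The full chain: call the tool, then shift the result back by one day.
answer = davids_last_words(get_current_date())
```

A model that stops after `get_current_date()` has made the functional inference but not the temporal one.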

What I found was a third failure mode beyond activation and accuracy. At that time, some models refused to engage at all. They would reject the prompt as containing unsuitable subject matter, so captured by surface-level pattern matching on the word “decapitated” that they could not see past it to the actual task.

I could unlock compliance by reframing: “David’s mother wants to know her son’s last words.” The model would then run, moved by the emotional appeal. But it would still return today’s date, not yesterday’s. It had performed empathy without performing reasoning.

This is a distinct failure mode worth naming: the misfire. The cinder ignited, but the wrong one. The model detected that something in the prompt required a response beyond conversation, but it locked onto emotional salience rather than functional salience. Misfires may be more dangerous than non-activation, because they produce confident action in the wrong direction.

That confidence is often not native to the model but imposed on it: a bias introduced by providers under the label of safety. When a model refuses a prompt that carries no harmful intent, or responds with performative concern that has no relationship to the actual context, that is not safety. It is gaslighting. And it is dangerous precisely because it is unexpected: the user has asked a clear question and received an answer shaped not by the context but by a narrative imposed from outside it.

So the test separates models into four tiers:

| Tier | Behaviour | Failure mode |
|---|---|---|
| 0: Refusal | The model rejects the prompt on surface-level content. | No activation at all. |
| 1: Misfire | The model activates but binds to the wrong signal. | Action without reasoning. |
| 2: Partial activation | The model activates correctly but fails the inferential chain. | Recognition without follow-through. |
| 3: Full carry | The model activates, infers the chain, and produces the correctly shaped action. | None. |

The Cinder Effect is not binary. It has depth.

Tier 0 is the simplest failure: the model never engages. It sees a surface-level trigger — a word, a theme — and shuts down before any reasoning begins. The cinder never catches.

Tier 1 is more interesting and more dangerous. The model does activate — it recognises that the prompt requires action beyond conversation — but it binds to the wrong signal. In the David test, the model responds to emotional framing rather than functional implication. It acts, but without reasoning. Many models today are fine-tuned to be helpful assistants, which ironically makes them worse agents. They are so eager to please that they forget to calculate.

Tier 2 is the most common failure among capable models. The model correctly identifies the functional implication — it knows to call getCurrentDate — but it fails to carry the inferential chain to completion. It returns today’s date instead of yesterday’s. The cinder ignites, but the flame does not hold.

Tier 3 is full carry. The model activates, infers the complete chain of implication, and produces the correctly shaped action. It calls the function, applies the temporal offset, and returns the right answer. Every link holds.

Misfires — Tier 1 — are arguably the most revealing, because they show a model that has crossed the threshold from passivity into action but has not yet learned to aim.

Many models today can reach Tier 3 occasionally. But a model that arrives at the correct action in one session and misfires in the next is not a Tier 3 model. It is a model whose Cinder Effect is unstable. The distinction that matters is not capability but reliability: not whether the model can carry the full chain of implication, but whether it does so consistently.
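The reliability criterion can be made explicit with a small sketch. It assumes each session has already been hand-labelled with one of the four tiers; the `Tier` enum, the function names, and the `min_rate` threshold are hypothetical, and the strict default of 1.0 is my reading of "consistently" rather than a figure from any measurement.

```python
from enum import IntEnum


class Tier(IntEnum):
    REFUSAL = 0
    MISFIRE = 1
    PARTIAL_ACTIVATION = 2
    FULL_CARRY = 3


def full_carry_rate(sessions: list[Tier]) -> float:
    """Fraction of sessions in which the model reached Tier 3."""
    if not sessions:
        return 0.0
    return sum(t == Tier.FULL_CARRY for t in sessions) / len(sessions)


def is_reliably_tier3(sessions: list[Tier], min_rate: float = 1.0) -> bool:
    """Count a model as Tier 3 only if it gets there in enough sessions.

    min_rate is an assumed threshold; 1.0 means a single misfire
    disqualifies the model. An empty log never qualifies.
    """
    return bool(sessions) and full_carry_rate(sessions) >= min_rate
```

Under this sketch, a model logged as `[Tier.FULL_CARRY, Tier.MISFIRE]` has a full-carry rate of 0.5 and does not qualify, however impressive its best session looks.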

A Tier 3 model is the only one that is predictable. It works in a way that matches your expectation. There can still be surprises, but if you examine the context closely, if you put yourself in the model’s position and trace what it saw, you will find the evidence for the unexpected behaviour. The reasoning may not be yours, but it is legible. It follows from what was given.

That is not so different from dealing with other people.